AI adoption in Australia is accelerating, but so is public fear, according to one expert.

It comes as 78% of Australians say they are concerned about negative outcomes from AI and only 36% say they trust the technology, while companies remain largely unprepared.

A recent survey by KPMG and the University of Melbourne revealed that while 50% of Australians use AI regularly, trust remains elusive.

A separate study by the Governance Institute of Australia also found that 93% of organisations cannot effectively measure AI return on investment, and nearly half have received no formal AI training.

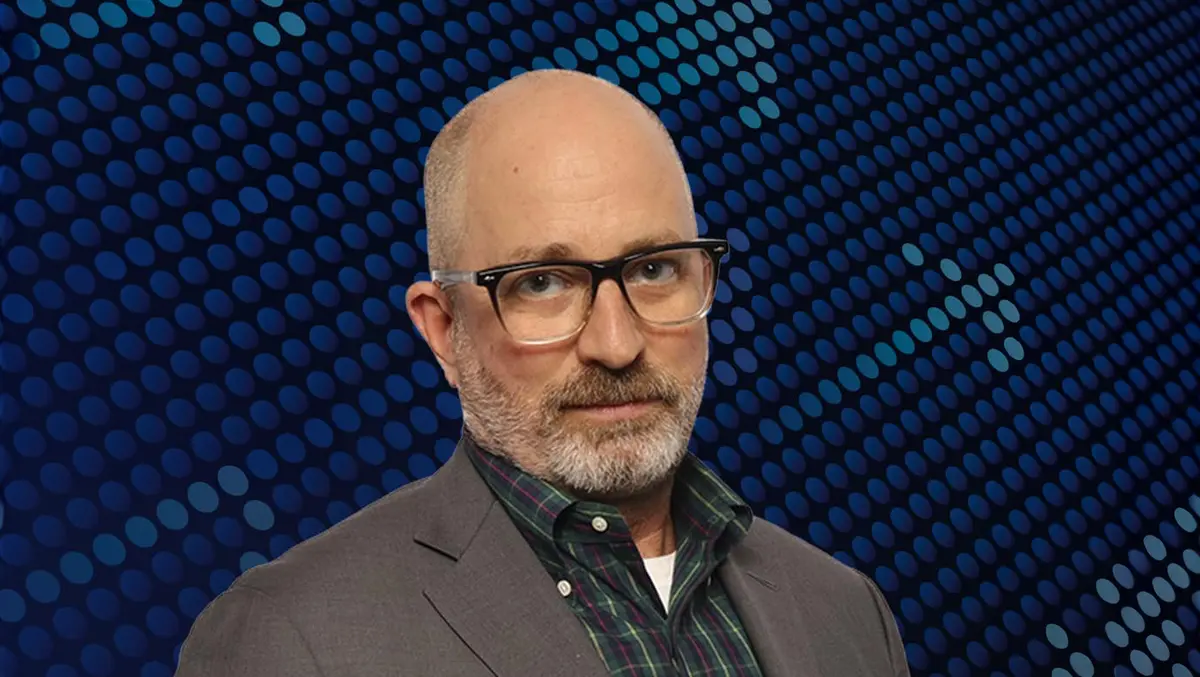

Jason Straight, Senior Managing Director at K2 Integrity and Head of its Cyber Resiliency and Digital Risk Advisory practice, argues that robust governance can accelerate, not inhibit, the safe adoption of AI technologies.

He believes organisations should treat risk governance as a catalyst for innovation rather than a brake on it.

"Governance controls can contribute to innovation in significant ways," he said. "One of the first steps is to establish a mechanism for identifying, assessing and approving business use cases for AI. This process alone uncovers innovation across the company that might otherwise have gone unrecognised."

Straight emphasised the importance of user training, noting that governance is not simply a gatekeeping exercise but a means to enable creativity safely.

"One of the barriers to wide adoption of AI is that users don't have a clear understanding of its capabilities and how to use the tools safely. Training diminishes this barrier, builds confidence in the technology and unlocks creativity across the employee base," he said.

He also warned against the false sense of security that can arise when companies deploy large language models (LLMs) internally.

"First of all, an AI system deployed in a secure enterprise environment creates far less risk than using a web-based public LLM," he said. "But few systems are as 'safe' as companies might like to think."

Straight cautioned that cyber resilience strategies must assume internal compromise is possible.

"An attacker might be able to use an LLM to more efficiently identify valuable data across an organisation. It is also conceivable that the LLM itself may be targeted with the goal of disrupting its operation or even taking it offline," he said.

To mitigate these risks, Straight urged organisations to apply the same diligence to AI systems as they would to other critical infrastructure.

"Activity involving AI systems should be logged and monitored to detect unauthorised access and anomalous events. Incident response plans should be updated to include steps to respond to an incident affecting AI systems," he explained.

He added that organisations must also be ready to pause AI systems temporarily without disrupting business operations.

"From a resilience standpoint, that includes readiness to take an AI system down temporarily without bringing the business to a halt," he said.

Straight has observed that many AI ethics boards are either disbanded or ignored altogether. For governance to work, he said, it needs to be rooted in leadership.

"Direct, authentic and visible commitment from leadership from the start is critical," he said.

Ethics boards, he suggested, should be enablers, not enforcers. "One of the key roles of an AI ethics board is to evangelise the benefits of using generative AI across the organisation while simultaneously fostering a culture of responsible AI use," he said.

He stressed the need for feedback mechanisms and clear metrics.

"Clear mechanisms to collect and respond to feedback from stakeholders will help in making sure the board is viewed as a trusted advisor. Recognising and even celebrating success is as important to governance as policy enforcement and risk mitigation," he said.

When it comes to the intersection of cybersecurity and model performance, Straight believes many organisations are focusing too narrowly on data leakage. "While this is the most obvious and arguably the most damaging category of risks, there are other less visible threats to consider," he said.

One of the most serious issues, he explained, is the tendency of generative AI tools to fabricate information.

"The biggest flaw of current generative AI tools is their tendency to fabricate and mislead - and to do so articulately, confidently and persuasively. Users must remain sceptical of any AI output," he said.

He warned that flawed AI output could erode data quality over time.

"The more flawed AI output seeps into an organisation's data stores, the more likely it is that other users or subsequent AI inferences will repeat or propagate the flaws," he said.

Straight also addressed the issue of "Responsible AI" being reduced to a public relations slogan. He called for a genuine, top-down approach.

"Users recognise when leadership is sincere in their commitment and when governance is a 'check-the-box' exercise that may be ignored or circumvented," he said.

To navigate legal grey zones, he advised companies to proactively eliminate ambiguity.

"The best way to do that is to embrace transparency, explainability and accountability across all AI use cases," he said.

Although legal precedent is still emerging, Straight warned that failing to disclose when and how generative AI is used could expose companies to liability.

"It seems likely that failure to clearly disclose when and how generative AI is used will get companies in trouble," he said.

He stressed that, "no matter how powerful the technology", humans remain ultimately responsible for its use.

"AI-generated output must always be carefully reviewed and validated by a qualified human before it is shared internally or externally," he said. "Anything less can lead to an erosion of trust with the market."